Great database performance starts with great database design.

Great database performance starts with great database design.

I have yet to meet someone to argue with that statement. I have, however, met many a database that has a less than optimal design. Sometimes this is due to the evolution of the database; it was built for one purpose and it is now being used for something different. Often times it is a result of the designer not being to anticipate the length of time the database will be in use, or the amount of data that the database will require.

Sometimes it is a result of the designer not knowing better.

One area I see overlooked in database design are with datatypes. I think most designers do not consider the implications of their choices. As long as the table can be created, that is where their concern ends. They then let the DBA worry about performance.

Here are three ways that inefficient datatypes will hurt you.

1. Inefficient Datatypes Require More Storage

I keep hearing all the time that “disk is cheap”. I won’t argue that the cost of storage has come way down. I will argue that just because it is cheap doesn’t mean it isn’t costing you in lots of ways you haven’t considered; backups and tape storage come to mind.

I once found a database table that had 400 billion rows and several columns defined as DATETIME. A quick review of the data showed that the SMALLDATETIME datatype would be sufficient and would save over 300GB in disk space!

That was a lot of extra space to be carrying around. Not just the data files, but the backup files as well, the space on tape and the extra time it was taking to save to tape, too.

And I haven’t even talked about the extra space needed for all of the indexes that we hope exist on that table, too! It is not uncommon to see the same column used in a handful of different indexes. That means that this inefficient piece of data is being stored many times, not just once.

2. Inefficient Datatypes Require More Memory

All those extra bytes don’t just take up space on disk, they also take up space inside of SQL Server’s buffer cache. The fewer number of bytes it takes to fit a row on a page, the fewer pages need to get brought into the buffer cache to satisfy a query, then the faster the query.

You might be apt to say “Hey, we got indexes in place to speed up the query”. Sure, but they are also using the inefficient datatype and are therefore also needing to load extra I/O into memory.

It’s that simple: fit more rows on a page then fewer pages need to be brought into memory.

3. Inefficient Datatypes Result In Decreased Performance

So, more disk storage results in more logical I/O, and that’s one way you will see decreased performance. Of course this will affect other queries, too, as you are filling your buffer cache with more pages than necessary. That means other queries that need different pages to be read into memory are more apt to be slower, too. But there is another hidden performance issue when it comes to datatypes: mismatches.

When your code doesn’t match the datatype in your table bad things can happen. Here’s an example for you using AdventureWorks2012, I will get set STATISTICS IO to be ON and will also grab the actual execution plan:

SET STATISTICS IO ON

GO

SELECT NationalIDNumber, LoginID

FROM HumanResources.Employee

WHERE NationalIDNumber = 112457891

GO

You should see the statistics output to be similar to the following:

Table 'Employee'. Scan count 1, logical reads 6, physical reads 0, read-ahead reads 0,

lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

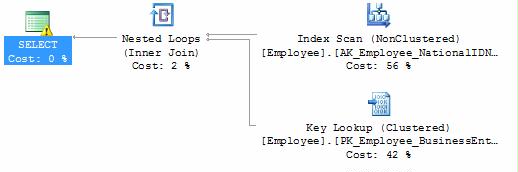

Here is the actual execution plan, does anything stand out to you here?

Yeah, there is a big warning sign. If you examine the properties for that icon a bit more you will find the following message:

Yeah, there is a big warning sign. If you examine the properties for that icon a bit more you will find the following message:

Type conversion in expression

(CONVERT_IMPLICIT(int,[AdventureWorks2012].[HumanResources].[Employee].[NationalIDNumber],0))

may affect "CardinalityEstimate" in query plan choice, Type conversion in expression

(CONVERT_IMPLICIT(int,[AdventureWorks2012].[HumanResources].[Employee].[NationalIDNumber],0)=[@1])

may affect "SeekPlan" in query plan choice, Type conversion in expression

(CONVERT_IMPLICIT(int,[AdventureWorks2012].[HumanResources].[Employee].[NationalIDNumber],0)=(112457891))

may affect "SeekPlan" in query plan choice

This means that because of an implicit conversion we were not able to use an index. OK, so how do we fix that problem? Simple, we help the optimizer to avoid the implicit conversion by giving it a more correct datatype as follows:

SET STATISTICS IO ON

GO

SELECT NationalIDNumber, LoginID

FROM HumanResources.Employee

WHERE NationalIDNumber = '112457891'

GO

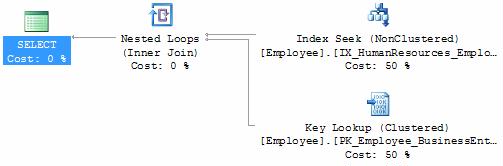

You will notice that we have fewer logical reads (for me it went from 6 to 4), and the actual execution plan will use an index seek (instead of an index scan) and the error message has disappeared as well:

How do you know if this type of implicit conversion is affecting your servers right now? You can scrub your plan cache looking for this info. Here is a great script from Jonathan Kehayias (blog | @SQLPoolboy) that will help you do just that.

How do you know if this type of implicit conversion is affecting your servers right now? You can scrub your plan cache looking for this info. Here is a great script from Jonathan Kehayias (blog | @SQLPoolboy) that will help you do just that.

Those are three ways that datataypes can hurt you. Tomorrow (Wednesday, August 29th, 2012) at 2PM EDT I am doing a webinar “Database Design: Size DOES Matter” where I will talk about the issues I see with database design, why datatypes matter, and possible solutions for you to work around the problem.

Godd Info Tom. Incorrect datatypes can also lead to deadlock errors.

http://sqlindian.com/2012/08/10/deadlock-due-to-implicit-conversion/

Hi,

I saw your ‘Size DOES Matter’ webinar yesterday. It was really good. When I talk about features

like snapshots/compression etc. I always point out sql edition to avoid disappointment/mails/questions.

Anyway – I noticed you used script that finds types with the shortest width that’s required to store data inside table and estimates impact/savings. Is it publicly available somewhere?

I also saw your webinar yesterday. I was wondering if there is a place to download the slides used during your presentation?

Here is a recording of yesterday’s webinar. http://www.confio.com/recorded-webinars/

I also would like a copy of the script Janusz mentioned. Thanks.

Ryan,

I can send you the slides, thanks for attending!

Janusz,

Thanks for attending. The script is not publicly available yet but I hope to have one soon.