I may be in the middle of a career makeover, but that does not excuse me from missing out on SQL University. This week we are going to talk about parallelism, an aspect of SQL Server that many DBAs have only a cursory amount of information.

tempdb

The first thing you need to do is to understand what parallelism is and why it happens. Adam Machanic (blog | twitter) has already provided you with a great background on this and I won’t bother trying to explain things in any more detail than he already has. But in my role as teacher/coach I have found the need to be able to explain things in various ways to make certain that everyone has an understanding. And at this level you need awareness of the topic so that if someone says the word ‘parallelism’ you don’t think they are referring to some aspect of literature.

Consider the story of the Manhattan Project. Inside of that project you had someone put together a plan, the plan was to be executed in parallel, and someone had to coordinate the output into a defined deliverable as an end result. Parallelism works in much the same way: you submit a query, something decides that the query is best run in parallel, and at the end the streams are gathered to produce an output that may or may not set off a chain reaction that will destroy the Earth.

msdb

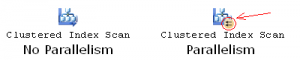

Once you have awareness of parallelism the next step is to recognize it when the opportunity arises. Chances are the first time you will see evidence of a parallel query is inside of an execution plan, and you will be able to distinguish it by a special icon as follows:

(You can find a full list of graphical execution plan icons at http://technet.microsoft.com/en-us/library/ms175913.aspx)

This special icon can show up on various pieces of your execution plan, you will need to be mindful of this when examining your plans.

Another way to find out about query parallelism is to use wait stats. You can see a list of wait types and their definitions over at http://technet.microsoft.com/en-us/library/ms179984.aspx. The specific wait type that you primarily want to look for is named CXPACKET, but there are other wait types that are related to parallel processing as well such as ASYNC_DISKPOOL_LOCK, CXROWSET_SYNC, EXCHANGE, EXECSYNC, and the ever popular QUERY_EXECUTION_INDEX_SORT_EVENT_OPEN (yeah, I had never heard of that one before today). The point here is that there is more than one way for you to be aware that statements are being run in parallel.

model

The real question you now face is whether or not parallelism is a bad thing for you or not. In others words, why should you even care about this topic? At the end of the day all anyone ever cares about is performance, and if performance is degraded as a result of parallelism then there are two options for you to use.

The first option that you should be aware of involves the use of sp_configure:

sp_configure 'show advanced options', 1; GO RECONFIGURE WITH OVERRIDE; GO sp_configure 'max degree of parallelism', 8; GO RECONFIGURE WITH OVERRIDE; GO

Note that this setting is for the instance as a whole. Also, keep in mind this information before you start playing around. In the past I have often set this configuration to be equal to the number of physical processors minus one. The reason behind this was that I always wanted to leave one CPU available to service other requests should a query decide to go parallel and consume all available CPUs at the same time. By limiting this at the instance level I can ensure a certain amount of stability for that instance.

Another option is to use the MAXDOP query hint. This query hint will override the sp_configure setting, so it would be possible for you to allow for more CPUs to run in parallel for your query and leave the server at a lower amount, or vice-versa. The hint goes at the end and looks something like this:

SELECT blah FROM blahblah OPTION (MAXDOP 1)

master

If you want to be at this level then you are going to be designing queries to either run in parallel or serially. What’s that? Oh yes, the optimizer is going to make the decision for you at run time, sure. But you already know that you can change the behavior by altering some configurations, right?

However, at this level, you will find yourself not settling for those configuration changes in order to force the engine to do what you want. Instead you will dive deep into the query to see if you can understand why the optimizer is deciding that a parallel plan is the best choice. In some cases this could be the result of poor indexing, or perhaps some fragmentation, or a host of other reasons as to why a parallel plan was chosen as the least expensive and ultimately selected as the one to use.

To be a master of parallelism you will need to start viewing queries with that level of discern.

resourcedb

You should start with Adam’s series for SQL University on parallelism. If that isn’t enough for you then head over to MSDN for more information. Craig Freedman’s blog is also a valued resource.

Interesting stuff. Couple of questions:

1. How does fragmentation affect the optimisers choice of a parallel plan or not?

2. How does it make sense to limit DOP to (#cores-1) in a co-operatively multi-tasked environment like SQLOS?

Paul

Paul,

Great questions. I’ll start with number two first.

It only makes sense (to me, at least) if you have been seeing problems with queries that are consuming all CPU’s as a result of parallelism *and* your instance still needs to service other requests at the same time. If you have a batch process that takes up 32 cores but no one else needs to use that box then you really don’t have an issue. But if you take all 32 cores and someone else is locked out as a result then chances are your phone will ring. To avoid that problem it *may* make sense for you to configure your instance to be N-1 by default.

My understanding of that is you could be fragmented to the point that the plan decides to do a scan rather than a seek, and the scan could also be kicked off in parallel. Now, the optimizer is always going to choose the plan with the least cost, so it is trying to do you what it thinks is a favor. So, you could see evidence of parallelism which is only there as a result of some fragmentation. If you clean up the fragmentation, you should get a different plan, and the optimizer may even decide that parallelism is not needed. So, it is not necessarily a direct correlation (fragmentation implies parallelism), but more of a cause and effect (fragmentation implies a less than optimal plan which implies parallelism is used). I guess you could look at this as a warning sign for you to look to a root cause rather than just changing some config options.

resourcedb is Craig Freedman’s blog archive, from which I learned much of the information I’m sharing in my series:

http://blogs.msdn.com/b/craigfr/archive/tags/parallelism/

Thanks Adam, I will add that to my original post.

Adam,

Yes! Craig’s blog is resourcedb for so many things. I particularly like his PDF presentation http://blogs.msdn.com/b/craigfr/archive/2007/04/17/parallel-query-execution-presentation.aspx

I was fortunate enough to make a small contribution to that.

His chapters in Inside SQL 2005 Query Tuning & Optimisation are also excellent.

Paul

Thomas,

Thanks for that. Server MAXDOP is controversial I know. My thoughts are that SQL Server generally load-balances between concurrent requests very well (co-operative scheduling) so cores-1 is not necessarily a good rule of thumb. Diminishing returns and NUMA concerns are so I tend to set MAXDOP 4 and tune on a case-by-case basis, except heavily-OLTP systems which get server MAXDOP 1 and OPTION overrides to that as required.

Fragmentation. The optimiser does not *directly* consider fragmentation at all – but it might make a different cost-based decision if an index happens to have more pages than it needs due to wasted space (page splits, low fillfactor etc).

Paul

Paul,

Thanks for the clarifications. I have only rarely needed to configure a system to be a specified number. I try to let the optimizer do the work for me instead.

Tom,

Recently one of my SQL server was showing the wait CXPACKET 1757062.70 wait time in ms.

what i did was changed the ‘max degree of parallelism’ to 4.I have taken value 4 cause the processor count was 8. Now my question is whether the above calculation is correct and also can we make default setting while building new SQL Server.

Shekhar,

Thanks for the well wishes.

As for the default setting for max degree of parallelism, the answer is: it depends. I would recommend that you examine the queries that are experiencing that particular wait type and see if they can be tuned.